Apache Flume

2/25/2024

You will need to change it so that the RabbitMQ source is bound to the sink.

    # Use a channel which buffers events in memory
    _channel.checkpointDir = /var/flume/checkpoint
    # Bind the source and sink to the channel

Here is my entire nf, am I missing anything?

The incoming messages are the ones being generated by the Python script, and the deliver / get and ack messages are the ones being consumed by Flume.

The following command (from the original tutorial) can be used to create the HCatalog table (make sure you enter it on a single line):

    hcat -e "CREATE TABLE firewall_logs (time STRING, ip STRING, country STRING, status STRING) ROW FORMAT DELIMITED FIELDS TERMINATED BY '|' LOCATION '/flume/rab_events'"

You should now be able to browse this table from the web interface. To do some analysis on this data you can now follow steps 5 and 6 from the original tutorial.
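The firewall_logs table definition implies that each event stored under /flume/rab_events is a single line with four pipe-separated fields. As a rough illustration of that layout (not part of the original tutorial), here is a small Python sketch that splits one such line into the table's columns. The sample row and the `FirewallLog` and `parse_line` names are made up for the example, and rejecting rows with the wrong field count is a choice of this sketch.

```python
from typing import NamedTuple


class FirewallLog(NamedTuple):
    """One row, with fields in the same order as the firewall_logs columns."""
    time: str
    ip: str
    country: str
    status: str


def parse_line(line: str) -> FirewallLog:
    """Split a pipe-delimited log line into its four fields."""
    fields = line.rstrip("\n").split("|")
    if len(fields) != 4:
        raise ValueError(f"expected 4 pipe-delimited fields, got {len(fields)}")
    return FirewallLog(*fields)


# Made-up sample row, only to show the shape of the data.
sample = "2024-02-25 10:15:00|192.168.56.1|US|SUCCESS"
row = parse_line(sample)
print(row.country, row.status)
```

Running a few lines from a file through `parse_line` can be a quick way to check that the generated entries really match the column order before querying the table.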

You will need to make sure that the virtual host exists. You can start the script by running:

    python.exe c:\path\to\generate_logs.py

When it starts, the script will declare an exchange and a queue and then start publishing log entries. You can see that everything is running by going over to the RabbitMQ Management console.

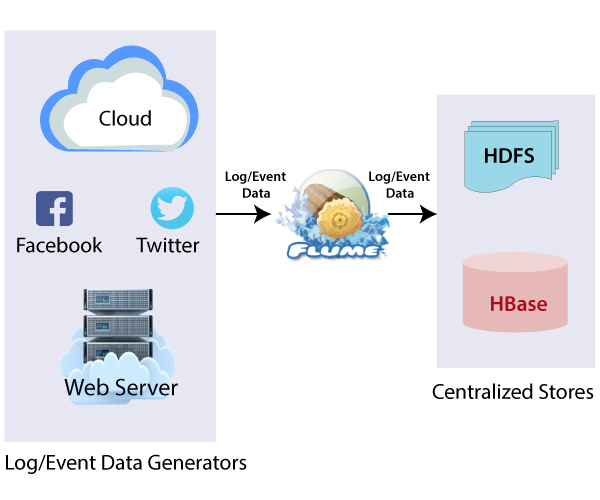

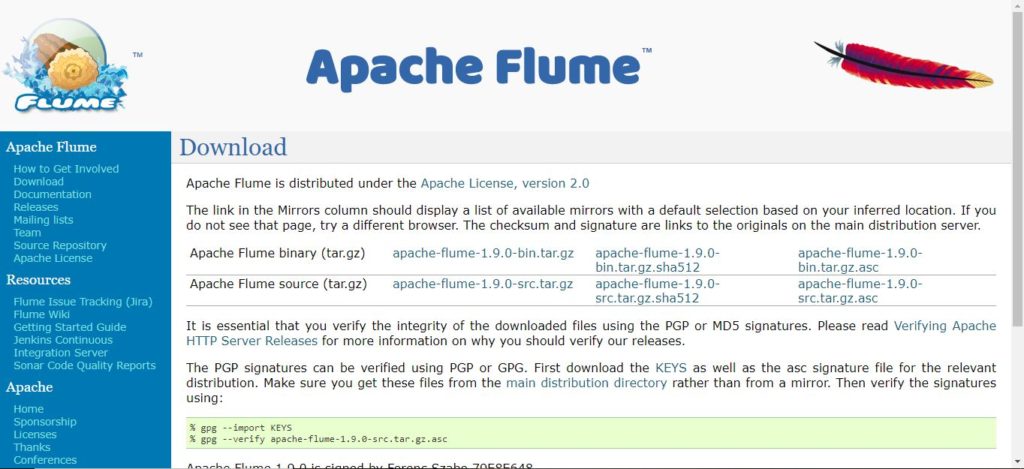

…continue reading about Apache Flume over on the Hortonworks website.

In this article I will cover off the following:

- Installation and Configuration of Flume
- Generating fake server logs into RabbitMQ

To follow along, you will need to:

- Download and configure the Hortonworks Sandbox
- Have a RabbitMQ broker running, and accessible to the sandbox

With the Sandbox up and running, press Alt and F5 to bring up the login screen. You can log in using the default credentials:

    login: root

After you've logged in, type:

    yum install -y flume

You should now see the installation progressing until it says Complete!

For more details on installation take a look at Tutorial 12 from Hortonworks.

Using the nf file that is part of my tutorial files, follow the instructions from the tutorial to upload it into the sandbox. Before uploading the file, you should check that the RabbitMQ configuration matches your system:

    _source1.hostname = 192.168.56.65

You shouldn't need to change anything else.

For Flume to be able to consume from a RabbitMQ queue, I created a new plugins directory and then uploaded the Flume-ng RabbitMQ library. The required directories can be created from the Sandbox console with the following commands:

    mkdir /usr/lib/flume/plugins.d
    mkdir /usr/lib/flume/plugins.d/flume-rabbitmq
    mkdir /usr/lib/flume/plugins.d/flume-rabbitmq/lib

Once these directories have been created, upload the file into the lib directory.

Starting Flume

From the Sandbox console, execute the following command:

    flume-ng agent -c /etc/flume/conf -f /etc/flume/conf/nf -n sandbox

Generate server logs into RabbitMQ

To generate log entries I took the original Python script (which appended entries to the end of a log file) and modified it to publish log entries to RabbitMQ. To run the Python script you will need to install the pika client library (see details on the RabbitMQ website). The script is set up to connect to a broker on localhost, in a virtual host called "logs".
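The publishing script itself is not reproduced in this post, so the following is only a minimal sketch of what such a script might look like, assuming the pika client library is installed. The exchange name, queue name, routing key, the direct exchange type, the durable flags, and the country and status values are all hypothetical; only the localhost broker, the "logs" virtual host, and the time|ip|country|status field order come from the post.

```python
import random
import time

# Hypothetical names: the post does not say what the real script calls these.
EXCHANGE = "logs_exchange"
QUEUE = "firewall_logs"
ROUTING_KEY = "firewall"


def make_log_entry(now=None):
    """Build one pipe-delimited entry in the order time|ip|country|status."""
    timestamp = time.strftime("%Y-%m-%d %H:%M:%S", time.localtime(now))
    ip = ".".join(str(random.randint(1, 254)) for _ in range(4))
    country = random.choice(["US", "GB", "DE", "IN"])  # made-up values
    status = random.choice(["SUCCESS", "ERROR"])       # made-up values
    return "|".join([timestamp, ip, country, status])


def publish_entries(count=10, host="localhost", virtual_host="logs"):
    """Declare the exchange and queue, then publish `count` log entries."""
    # pika is a third-party library; it is imported here so that
    # make_log_entry() can still be used on a machine without it.
    import pika

    connection = pika.BlockingConnection(
        pika.ConnectionParameters(host=host, virtual_host=virtual_host)
    )
    channel = connection.channel()
    channel.exchange_declare(exchange=EXCHANGE, exchange_type="direct", durable=True)
    channel.queue_declare(queue=QUEUE, durable=True)
    channel.queue_bind(queue=QUEUE, exchange=EXCHANGE, routing_key=ROUTING_KEY)
    for _ in range(count):
        channel.basic_publish(
            exchange=EXCHANGE, routing_key=ROUTING_KEY, body=make_log_entry()
        )
    connection.close()
```

Calling `publish_entries()` against a running broker would send ten entries. The queue name used here would have to match whatever queue the Flume RabbitMQ source is configured to read from.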
This post is an extension of Tutorial 12 from Hortonworks (original here), which shows how to use Apache Flume to consume entries from a log file and put them into HDFS.

One of the problems that I see with the Hortonworks sandbox tutorials (and don't get me wrong, I think they are great) is the assumption that you already have data loaded into your cluster, or they demonstrate an unrealistic way of loading data into your cluster: uploading a CSV file through your web browser. One of the exceptions to this is Tutorial 12, which shows how to use Apache Flume to monitor a log file and insert the contents into HDFS. In this post I'm going to further extend the original tutorial to show how to use Apache Flume to read log entries from a RabbitMQ queue.

Apache Flume is described by the folk at Hortonworks as:

"Apache™ Flume is a distributed, reliable, and available service for efficiently collecting, aggregating, and moving large amounts of streaming data into the Hadoop Distributed File System (HDFS). It has a simple and flexible architecture based on streaming data flows and is robust and fault tolerant with tunable reliability mechanisms for failover and recovery."
